DeepSeek V4 Pro is live on 0G Private Computer

Yesterday we shipped 0GM-1.0, our first proprietary AI model. Today the second piece of the Private Computer lineup lands. DeepSeek V4 Pro is live on pc.0g.ai, routed through a TeeTLS-attested transport layer to Alibaba Bailian's hosted deployment of the model. Two models, two trust modes, one endpoint. The Private Computer is now a multi-model platform builders can target without picking a single provider.

The Private Computer now exposes two trust models on the same app-sk-* key flow. 0GM-1.0 is the sovereign tier: our model, our enclave, our GPUs, TeeML-attested inference inside the box. DeepSeek V4 Pro is the verifiable frontier tier: Bailian hosts the inference running DeepSeek's open weights, 0G adds a TeeTLS-attested transport layer so every routing hop is provable on chain. Builders pick by the legal shape of their data.

What's launching

DeepSeek V4 Pro is reachable today through Router Mode (pc.0g.ai/) and Advanced Mode (pc.0g.ai/sdk/playground/chat?model=deepseek-v4-pro). Same key flow as 0GM-1.0; the API surface is the 0G Router at https://router-api.0g.ai/v1, a single public endpoint that normalizes requests and responses across providers.

| Specification | Value |
|---|---|
| Architecture | Mixture of Experts |
| Total parameters | 1.6T |
| Active per token | 49B |
| Context window | 1M tokens |
| Max output | 384K tokens |
| Reasoning modes | Low / medium / max effort levels (verified live; reasoning_effort: max triples reasoning tokens vs default) |
| Modality | Text + image input (per HuggingFace model card) |
| Tool use | OpenAI tools schema (per HuggingFace model card) |
| License | Open weights on HuggingFace |
| Hosted inference | Alibaba Bailian enterprise API |
| 0G verification | TeeTLS-attested routing (transport layer) |

Source: HuggingFace model card, May 2026.
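The reasoning effort levels in the table can be selected per request. Here is a minimal sketch of building such a request body. It assumes `reasoning_effort` is a top-level field of the chat completions body and accepts the strings `low`, `medium`, and `max`. The table names the levels, but the field placement and the helper name `build_reasoning_request` are assumptions for illustration, not confirmed API.

```python
import json

# Effort levels named in the spec table; the exact accepted strings are an assumption.
EFFORT_LEVELS = ("low", "medium", "max")


def build_reasoning_request(prompt, effort="medium"):
    """Return a chat-completions body with a reasoning effort level (hypothetical helper)."""
    if effort not in EFFORT_LEVELS:
        raise ValueError(f"effort must be one of {EFFORT_LEVELS}, got {effort!r}")
    return {
        "model": "deepseek-v4-pro",
        "messages": [{"role": "user", "content": prompt}],
        # Assumed to be a top-level field of the request body.
        "reasoning_effort": effort,
    }


if __name__ == "__main__":
    body = build_reasoning_request("Prove that 17 is prime.", effort="max")
    print(json.dumps(body, indent=2))
```

With the OpenAI Python SDK, a non-standard field like this can be passed through the `extra_body` argument of `client.chat.completions.create`. Because `max` roughly triples reasoning tokens versus the default, it is probably best reserved for prompts that need it.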

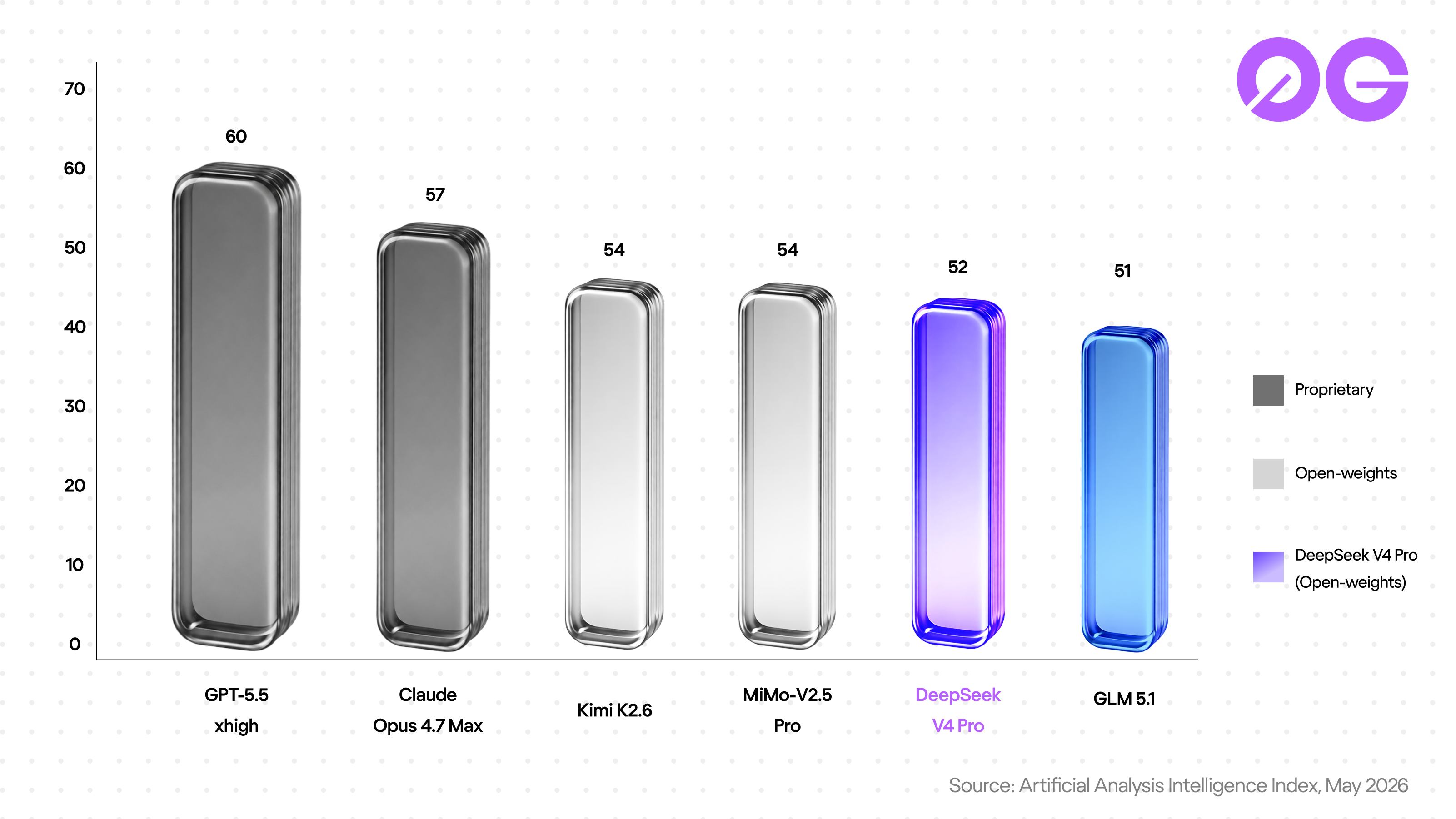

On the Artificial Analysis Intelligence Index for May 2026, DeepSeek V4 Pro lands in the open-weights frontier tier alongside Kimi K2.6, MiMo-V2.5-Pro, and another open-weights model already live on 0G's Private Computer: GLM-5.1. The band includes proprietary names like Claude Opus 4.7 Max. What changes today is how you reach it.

TeeML and TeeTLS, in one section

Two attestation primitives, different scopes, both verifiable on chain.

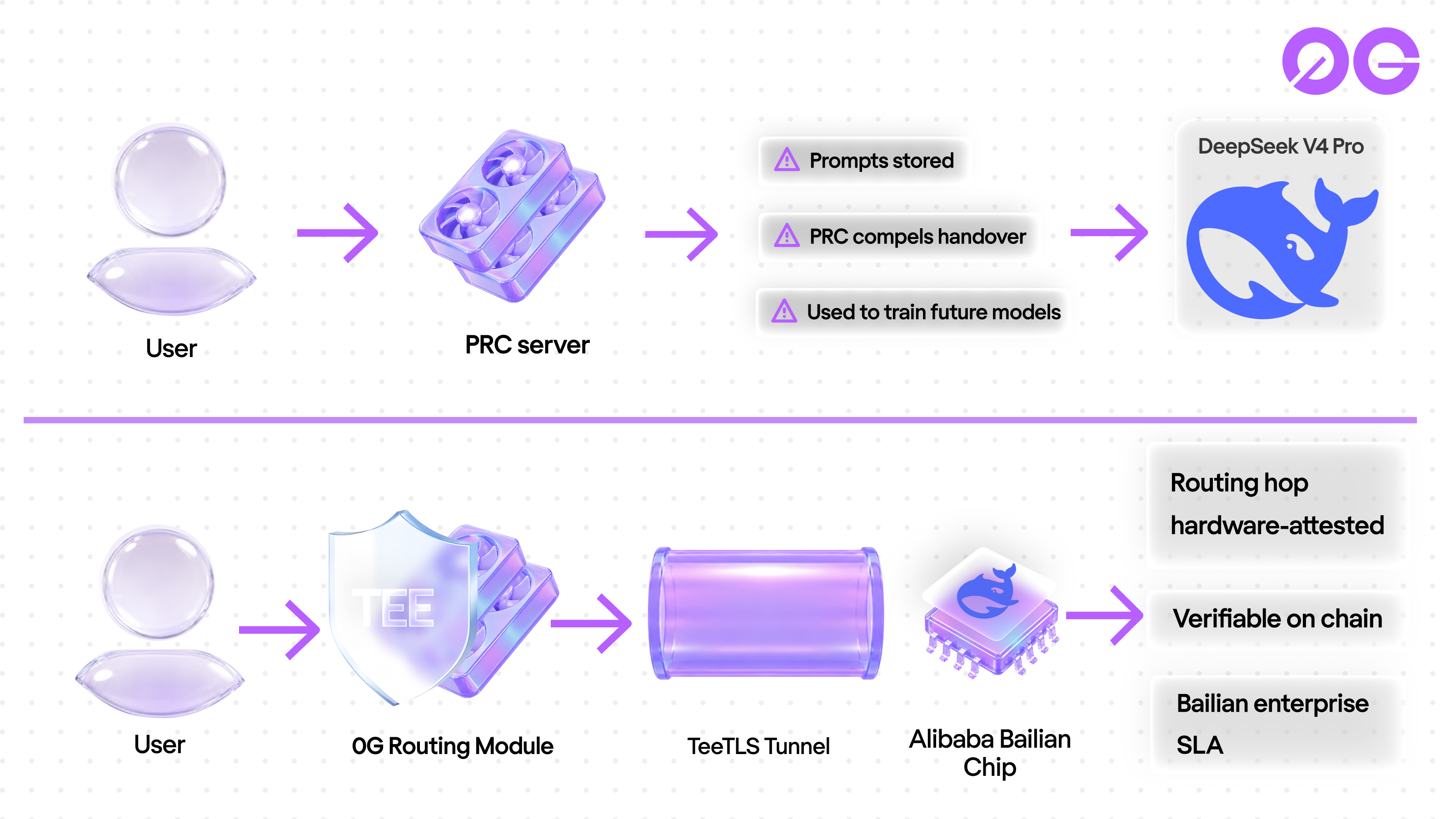

TeeML attests the inference itself. The model runs inside a 0G-operated TEE-sealed enclave; the operator cannot read the prompt. This is 0GM-1.0.

TeeTLS attests the routing path, not the inference. The TLS handshake from the routing layer to the upstream provider runs inside a TEE. Hardware proves the routing cannot be intercepted or silently redirected. Inference happens at the upstream. This is DeepSeek V4 Pro; the upstream is Alibaba Bailian, hosting DeepSeek's open weights.

This is a narrower guarantee than 0GM-1.0's TeeML, and a different shape. It is the right one for hosting a third-party frontier model honestly: we do not claim to host DeepSeek's inference; we attest the path your prompt takes to Bailian.

What 0G adds on top of DeepSeek V4 Pro

DeepSeek's direct API serves V4 Pro from PRC servers. Prompts are retained for as long as the account exists and are used to train future versions. NIST CAISI flagged the data exposure in May 2026; Italy, Australia, Taiwan, and South Korea have restricted DeepSeek on government devices.

The 0G deployment routes through TeeTLS to Bailian's enterprise API. Every prompt has onchain proof of which upstream it was sent to and which TLS handshake protected the transport. The inference still happens at Bailian under Bailian's enterprise terms, not under DeepSeek's consumer retention policy. You no longer trust by policy alone; you trust by verifiable transport plus a different SLA on the other end.

For workloads that legally cannot land on PRC infrastructure under DeepSeek's retention policy, this is the meaningful upgrade. For workloads that need full enclave-bound inference where no third-party infra touches the prompt, 0GM-1.0 remains the only choice on pc.0g.ai.

Build with it

Existing client code that targets DeepSeek's API through the OpenAI SDK works against the 0G deployment once you swap the base URL and the API key. The 0G Router normalizes requests and responses across providers; the endpoint is a single, public Router URL.

```python
from openai import OpenAI

# Point the standard OpenAI client at the 0G Router.
client = OpenAI(
    base_url='https://router-api.0g.ai/v1',
    api_key='app-sk-<YOUR_KEY>',
)

# Request a completion from DeepSeek V4 Pro by model name.
response = client.chat.completions.create(
    model='deepseek-v4-pro',
    messages=[{'role': 'user', 'content': 'Hello'}],
)

print(response.choices[0].message.content)
```

Router Mode picks between 0GM-1.0 and DeepSeek V4 Pro automatically based on the workload. Advanced Mode hands the choice to the builder. Tool calling works end-to-end: send an OpenAI tools=[...] payload and the response returns a proper tool_calls array on the message. To request onchain TEE attestation in the trace, set verify_tee: true in the request body; the response includes a tee_verified field you can verify against the provider's attestation on chain. For latency-aware routing, pass provider: {"sort": "latency", "allow_fallbacks": true}.
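The request options described above can be combined in one body. The sketch below builds that request with the standard library only and does not send it. The `/chat/completions` path suffix, the top-level position of `tee_verified` in the response, the `get_weather` tool, and all helper names are assumptions for illustration. Only the `tools`, `verify_tee`, and `provider` fields come from the text.

```python
import json
import urllib.request

# Router base URL from the text; the /chat/completions suffix is assumed (OpenAI-compatible).
ROUTER_URL = "https://router-api.0g.ai/v1/chat/completions"

# Hypothetical tool definition in the OpenAI tools schema.
WEATHER_TOOL = {
    "type": "function",
    "function": {
        "name": "get_weather",
        "description": "Look up current weather for a city.",
        "parameters": {
            "type": "object",
            "properties": {"city": {"type": "string"}},
            "required": ["city"],
        },
    },
}


def build_request(api_key, prompt, tools=None, verify_tee=True, sort_by_latency=True):
    """Build (but do not send) a POST request carrying the options from the text."""
    body = {
        "model": "deepseek-v4-pro",
        "messages": [{"role": "user", "content": prompt}],
    }
    if tools:
        body["tools"] = tools
    if verify_tee:
        body["verify_tee"] = True
    if sort_by_latency:
        body["provider"] = {"sort": "latency", "allow_fallbacks": True}
    return urllib.request.Request(
        ROUTER_URL,
        data=json.dumps(body).encode("utf-8"),
        headers={"Authorization": f"Bearer {api_key}", "Content-Type": "application/json"},
        method="POST",
    )


def extract_tool_calls(response):
    """Return the tool_calls list from the first choice, or [] if there is none."""
    message = response["choices"][0]["message"]
    return message.get("tool_calls") or []


def tee_verified(response):
    """True only if the response carries tee_verified: true (assumed top-level)."""
    return response.get("tee_verified") is True


if __name__ == "__main__":
    req = build_request("app-sk-<YOUR_KEY>", "Weather in Lisbon?", tools=[WEATHER_TOOL])
    print(req.full_url)
    print(json.dumps(json.loads(req.data), indent=2))
    # To send: json.load(urllib.request.urlopen(req)), then pass the result to the helpers.
```

A `tee_verified` flag in the response is a claim by the router. The text describes checking it against the provider's attestation on chain, which this sketch does not attempt.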

Pricing in $0G

Pricing is USD-denominated, pegged to $0G at an hourly FX rate, and settled on 0G Chain. Input costs $2.10 per million tokens and output costs $4.20 per million. That is above DeepSeek's promotional direct rate ($0.435 / $0.87, valid through May 31 2026) and roughly in line with the regular rate after the promo. The premium buys verifiable routing on top of Bailian's enterprise terms; for the data shapes where the direct API's retention policy is a non-starter, that is the reason the deployment exists.
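To make the per-token rates concrete, here is a small cost estimator. The two USD rates come from the paragraph above. The helper names are hypothetical, and the USD-per-0G rate is a caller-supplied placeholder because the text does not give a value for the hourly peg.

```python
from decimal import Decimal

# USD per million tokens, taken from the pricing paragraph.
INPUT_USD_PER_M = Decimal("2.10")
OUTPUT_USD_PER_M = Decimal("4.20")
MILLION = Decimal(1_000_000)


def estimate_cost_usd(input_tokens, output_tokens):
    """Estimated USD cost of one request at the listed rates."""
    total = Decimal(input_tokens) * INPUT_USD_PER_M + Decimal(output_tokens) * OUTPUT_USD_PER_M
    return total / MILLION


def usd_to_0g(cost_usd, usd_per_0g):
    """Convert USD to $0G at a caller-supplied rate (the real peg is set hourly)."""
    return cost_usd / Decimal(str(usd_per_0g))


if __name__ == "__main__":
    cost = estimate_cost_usd(200_000, 50_000)
    print(cost)                     # 0.63
    print(usd_to_0g(cost, "0.50"))  # 1.26 at a made-up rate of $0.50 per 0G
```

By the same arithmetic, both listed rates are about 4.8 times the promotional direct rates ($2.10 / $0.435 and $4.20 / $0.87).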

Frequently asked questions

Does 0G host DeepSeek V4 Pro's inference?

No. Alibaba Bailian's enterprise API hosts the inference. 0G's contribution is a TeeTLS-attested routing layer that proves on chain which upstream your prompt was sent to and how the transport was protected.

What is the difference between TeeML and TeeTLS?

TeeML attests the inference inside a 0G-operated TEE enclave (used by 0GM-1.0). TeeTLS attests the transport layer between the routing provider and the upstream model API (used by DeepSeek V4 Pro). Different scopes, both verifiable on chain.

Why pay a premium over DeepSeek's direct API?

For teams whose data cannot legally land on PRC infrastructure under DeepSeek's retention policy (regulated industries, proprietary code, agent stacks handling user PII), the direct API is not an option regardless of price. The TeeTLS-routed path through Bailian gives you Bailian's enterprise terms plus cryptographic proof of the routing path.

Which tier should I use, 0GM-1.0 or DeepSeek V4 Pro?

0GM-1.0 (TeeML, sovereign) for agentic coding and any workload that legally needs inference inside a 0G-operated TEE enclave. DeepSeek V4 Pro (TeeTLS, verifiable routing) for frontier generalist reasoning where you want top-tier model quality and onchain proof of routing and can accept Bailian as the inference host. Router Mode picks automatically if you do not want to choose.
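The decision rule in this answer can be written down as a small lookup. This is a sketch of the reasoning, not how Router Mode actually chooses. The `Tier` type, the `pick_tier` helper, and the `0gm-1.0` model identifier are assumptions; only `deepseek-v4-pro` appears as a model name in this post.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Tier:
    model: str           # model identifier sent in the request
    attestation: str     # "TeeML" or "TeeTLS"
    inference_host: str  # who actually runs the model
    attests: str         # what the attestation covers


TIERS = {
    # "0gm-1.0" is an assumed identifier; check the model list for the real one.
    "sovereign": Tier("0gm-1.0", "TeeML", "0G-operated TEE enclave", "the inference itself"),
    "verifiable-frontier": Tier("deepseek-v4-pro", "TeeTLS", "Alibaba Bailian", "the routing path"),
}


def pick_tier(needs_enclave_inference, agentic_coding=False):
    """Pick a tier following the FAQ answer above."""
    if needs_enclave_inference or agentic_coding:
        return TIERS["sovereign"]
    return TIERS["verifiable-frontier"]


if __name__ == "__main__":
    tier = pick_tier(needs_enclave_inference=False)
    print(f"{tier.model}: {tier.attestation} attests {tier.attests}; hosted by {tier.inference_host}")
```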

Where do I get a key?

Visit pc.0g.ai, connect your wallet, transfer at least 5 0G to the DeepSeek V4 Pro provider, and generate an app-sk-* key from the API Reference page. Then plug the key into your OpenAI SDK client with base_url='https://router-api.0g.ai/v1' (the same Router endpoint serves every model on the Private Computer).
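Once you have a key, one common pattern is to keep it out of source code and load it from the environment. The sketch below assumes a hypothetical environment variable named `ZG_API_KEY`. The only detail taken from this post is the `app-sk-` key prefix.

```python
import os

KEY_PREFIX = "app-sk-"
ENV_VAR = "ZG_API_KEY"  # hypothetical variable name, not defined by 0G


def load_api_key(env=os.environ):
    """Read the key from the environment and sanity-check its shape."""
    key = env.get(ENV_VAR, "").strip()
    looks_valid = (
        key.startswith(KEY_PREFIX)
        and len(key) > len(KEY_PREFIX)
        and "<" not in key  # rejects the app-sk-<YOUR_KEY> placeholder
    )
    if not looks_valid:
        raise RuntimeError(f"Set {ENV_VAR} to a key that starts with {KEY_PREFIX!r}")
    return key


if __name__ == "__main__":
    try:
        print("Key loaded, ending in", load_api_key()[-4:])
    except RuntimeError as err:
        print(err)
```

The returned key can be passed as `api_key` when constructing the OpenAI client.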

Build on 0G

- Try DeepSeek V4 Pro right now: pc.0g.ai

- Pull the open weights: HuggingFace

- Build on the full 0G stack: docs.0g.ai

- Follow @0G_labs for the rest of the Sealed Inference rollout

Sources:

- HuggingFace: deepseek-ai/DeepSeek-V4-Pro (model card, spec, license)

- Artificial Analysis Intelligence Index, May 2026

- DeepSeek Privacy Policy

- NIST CAISI evaluation of DeepSeek V4 Pro, May 2026

- 0G Labs: 0GM-1.0 launch, May 13 2026 (companion piece, sovereign tier)