DiLoCoX: training a 107B model across decentralized nodes

NVIDIA's CEO just validated decentralized AI training on a global stage. 0G published the research paper nine months ago.

Last week, Jensen Huang appeared on the All-In Podcast and said something that made TAO jump 17% overnight: decentralized AI training works.

Bittensor's community celebrated. Their Covenant-72B model, trained by 70+ contributors across Subnet 3, was the proof point Chamath Palihapitiya brought up in the conversation. Grayscale filed a TAO ETF. Headlines ran from Motley Fool to CryptoTimes.

Here's what those headlines missed: 0G trained a 107 billion parameter model on standard 1 Gbps network connections nine months before any of that happened.

The framework is called DiLoCoX. The arXiv paper went up in June 2025. It trained a modified Qwen1.5-107B across 160 GPUs spread over 20 nodes, achieved 357x better communication efficiency than standard AllReduce, and it did it on bandwidth you'd find in a typical office building.

0G published this research nine months before the current wave of headlines. The framework has been in development since before the market decided decentralized training was a real category.

What DiLoCoX actually solves

Training large AI models is an engineering problem, not a compute problem. The GPUs are out there. What kills you is the communication between them.

Standard distributed training uses AllReduce: every node sends its entire gradient to every other node after each training step. This works on NVIDIA's DGX clusters with 400 Gbps InfiniBand connections. On a regular 1 Gbps internet link, the math breaks down. Communication takes so long that your GPUs sit idle waiting for data to arrive.

DiLoCoX (Distributed Low-Communication Exchange) was built to solve exactly this bottleneck. The framework combines four techniques that, together, make large-scale training practical on standard network connections.

Pipeline parallelism across nodes. Instead of fitting the entire model on each node, DiLoCoX splits the model using PP=8 with DP=2 per cluster. While one node computes forward pass results, the next is already processing the previous batch. Computation and communication happen simultaneously.

Dual optimizer policy. Each node runs local SGD updates between global synchronization steps. A dual optimizer setup keeps these local updates aligned with the global model trajectory, preventing nodes from drifting apart during independent training.

One-step-delay overlap. Normally, nodes stop training while they wait for the latest global gradient. DiLoCoX keeps training using the previous step's gradient while the current one is being communicated. The paper proves mathematically that this delay causes negligible convergence impact when local training steps stay within bounds.

Adaptive gradient compression. Before sending gradients across the network, DiLoCoX compresses them 1,000x using low-rank decomposition (rank 2,048) combined with Int4 quantization. As training progresses and gradient information concentrates in lower-dimensional subspaces, the compression adapts automatically, reducing rank over time.

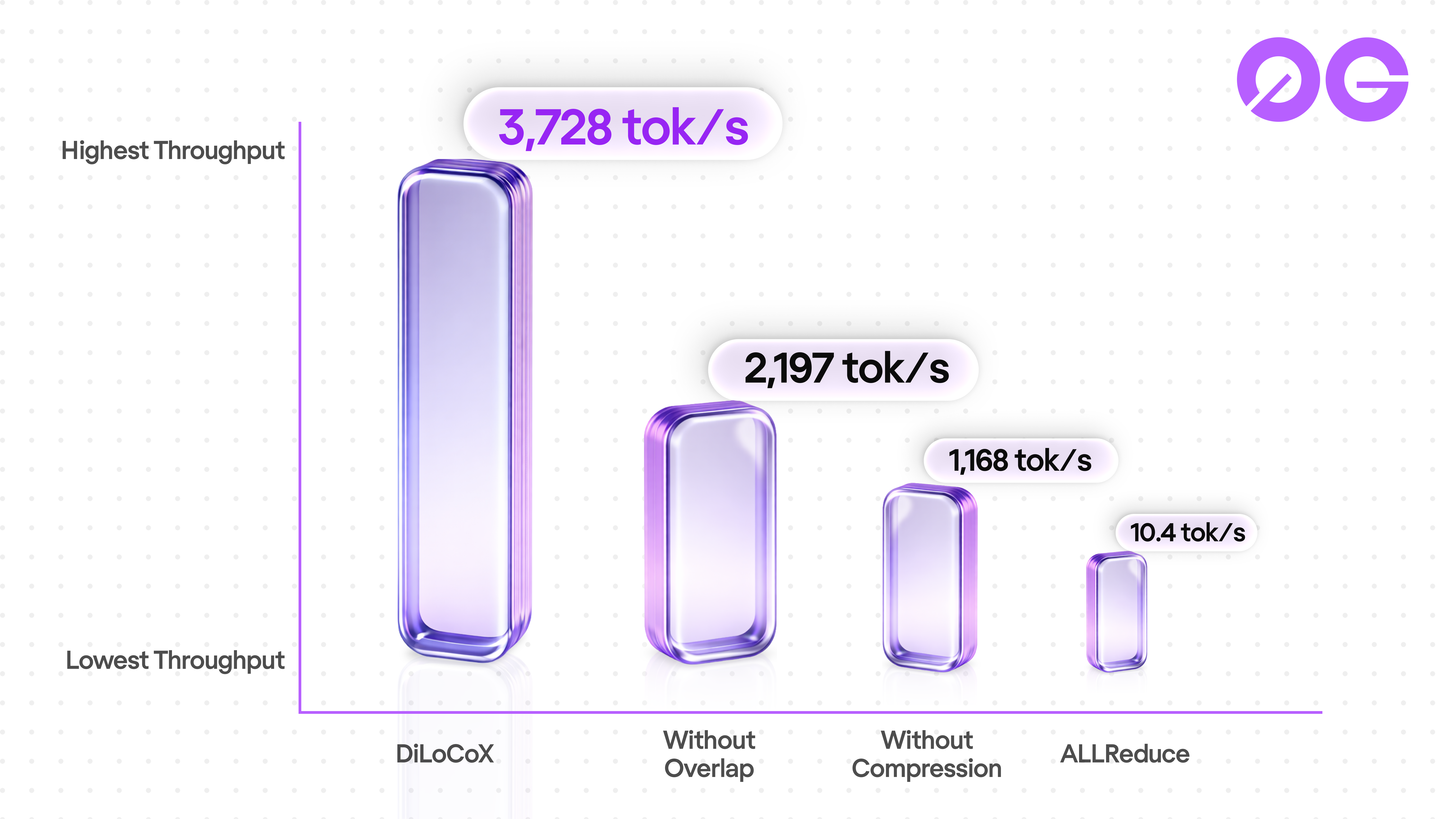

The result: 3,728 tokens per second versus AllReduce's 10.4 tokens per second on the same hardware and network conditions. That is the 357x speedup.

The 107B training run

The DiLoCoX paper tested the framework at multiple scales. The headline result is a modified Qwen1.5 architecture with 107 billion parameters, reduced from 80 to 78 layers for GPU memory optimization.

The training setup:

- 20 nodes, each with 8 NVIDIA A800-40G GPUs (160 total)

- Inter-node bandwidth: 1 Gbps (simulated via Linux traffic control)

- Dataset: WikiText-103

- Training steps: 4,000

- Developed in collaboration with China Mobile

The ablation study tells the real story:

| Configuration | Final loss | Throughput (tok/s) |

|---|---|---|

| AllReduce (baseline) | 3.90 | 10.4 |

| DiLoCoX (full framework) | 4.20 | 3,728 |

| Without one-step-delay overlap | 4.15 | 2,197 |

| Without gradient compression | 4.02 | 1,168 |

| CocktailSGD | 5.23 | N/A |

The trade-off is visible in the data. DiLoCoX's full framework has a 7.7% higher loss than AllReduce but runs 357x faster. Remove the one-step-delay overlap and you get slightly better convergence (4.15 loss) but lose 40% of the throughput. Remove compression entirely and loss improves to 4.02 but throughput drops to 1,168 tok/s.

CocktailSGD, another low-communication method, reached loss 5.23 on the same setup. OpenDiLoCo cannot even run at 107B because it lacks model parallelism support.

Two things worth being direct about: the 4,000 training steps on WikiText-103 is a proof-of-concept run, not production-scale training. And the 1 Gbps bandwidth was simulated using Linux tc, not tested on actual commodity internet. These are framework validation numbers. The point is that DiLoCoX scales to 107B while maintaining reasonable convergence, something no other decentralized training method has demonstrated at this parameter count.

Where decentralized training stands

Jensen Huang's comments didn't come out of nowhere. The past six months have produced real results in decentralized AI training.

Bittensor's Covenant-72B deserves credit. Training a 72 billion parameter model with 70+ independent contributors across commodity hardware is a genuine milestone. The model scored 67.1 on MMLU, outperforming Meta's LLaMA-2-70B on that specific benchmark (arXiv:2603.08163). The community proved that permissionless contributors can coordinate large-scale training.

The conversation shouldn't stop at one model from one subnet, though.

| Dimension | DiLoCoX (0G) | Covenant-72B (Bittensor) |

|---|---|---|

| Parameters | 107B | 72B |

| Scope | General training framework | Single model training run |

| Communication efficiency | 357x vs AllReduce | 146x compression (SparseLoCo) |

| arXiv paper | June 2025 | March 2026 |

| Full stack | Training + Inference + Storage + DA + Chain | Training subnet on Bittensor network |

| Verification | TEE-based cryptographic proof | Economic incentive alignment |

The comparison highlights a fundamental difference in approach. Covenant is a model. DiLoCoX is a framework. Covenant demonstrated that decentralized training can produce a working model you can download from HuggingFace today. DiLoCoX demonstrated that a training framework can scale to 107B parameters while keeping communication costs manageable on standard bandwidth.

One produces a model. The other produces infrastructure that can train any model.

The verification question

Training an AI model across distributed nodes introduces a problem that centralized clusters don't have: how do you know every node is doing honest work?

Bittensor uses economic incentive alignment. Nodes that produce bad gradients lose stake. The system assumes rational actors will behave correctly because cheating is expensive.

0G takes a different path. Every compute operation, including training contributions, can be verified through Trusted Execution Environments (TEEs). The hardware itself guarantees that the computation happened correctly, without relying on economic assumptions about participant behavior.

This matters more as the stakes get higher. When training runs cost millions in compute and the resulting model handles financial transactions or medical decisions, "the incentives should keep people honest" is a weaker guarantee than "the hardware proved it ran correctly."

Jake Salerno, 0G's VP of GTM, will expand on this at EthCC Cannes on April 1 in his keynote: "Why Verification Should Be a First-Class Citizen in AI." The argument: as AI models grow more powerful and widely deployed, the integrity of their training process becomes a security question, not just an engineering one.

A deeper analysis of verification approaches for decentralized AI training follows later this week on this blog.

What comes next

The DiLoCoX paper proved the framework works at 107B scale. The next phase is production.

0G is retraining the model with updated data, extended training runs, and open community participation. When complete, the weights and checkpoints will be released publicly.

The full 0G stack makes this jump from research paper to production infrastructure possible:

- 0G Compute provides the decentralized GPU marketplace where training jobs run with TEE verification

- 0G Storage handles training data, model checkpoints, and gradient logs at 30+ MB/s throughput

- 0G DA ensures data availability for training coordination, 50,000x faster and 100x cheaper than Ethereum's DA layer

- 0G Chain settles training contributions, staking, and verification proofs onchain

No other project ships all four layers. Bittensor runs training on its subnet but relies on external infrastructure for storage, settlement, and data availability. Centralized providers bundle everything but charge centralized prices and require trusting a single operator.

DiLoCoX is the training layer. The rest of the stack is what makes it usable in production.

Frequently asked questions

What is DiLoCoX?

DiLoCoX (Distributed Low-Communication Exchange) is a training framework for large AI models across distributed nodes connected by standard internet. It combines pipeline parallelism, dual optimizer policy, one-step-delay overlap, and adaptive gradient compression to achieve 357x communication speedup over standard AllReduce at the 107B parameter scale.

How does DiLoCoX compare to Bittensor's Covenant-72B?

They solve different problems. Covenant is a trained model (72B parameters, MMLU 67.1) built through permissionless community contribution. DiLoCoX is a training framework that demonstrated 107B parameter scale with 357x communication efficiency. Both advance decentralized AI training from different angles.

Is the 107B model available to download?

Not yet. The original training run was a framework validation on WikiText-103. 0G is retraining with extended data and will release weights and checkpoints publicly when complete.

What does 357x communication speedup mean?

Standard distributed training (AllReduce) achieves about 10.4 tokens per second for a 107B model on 1 Gbps bandwidth because nodes spend most of their time transmitting gradients. DiLoCoX achieves 3,728 tokens per second by compressing gradients 1,000x and overlapping communication with computation, making the network bottleneck nearly invisible to the training process.

How does 0G verify that decentralized training is done correctly?

0G uses Trusted Execution Environments (TEEs) to verify compute operations at the hardware level. This provides cryptographic proof that each node performed the correct computation, rather than relying solely on economic incentives to keep participants honest.

Build on 0G

- Read the DiLoCoX paper: arXiv:2506.21263

- Read the original announcement: Beyond Centralized Limits

- Explore the 0G stack: 0g.ai

- Start building: 0G Documentation

- Follow @0G_labs for the retraining announcement

Sources:

- DiLoCoX: A Low-Communication Large-Scale Training Framework (Qi et al., arXiv, June 2025)

- 0G Blog: First Distributed 100B+ Parameter AI Model (0G Labs)

- Covenant-72B arXiv paper (Bittensor Subnet 3, March 2026)

- Covenant-72B model weights (HuggingFace, Apache 2.0)

- Bittensor TAO Jumps 17% as NVIDIA CEO Praises Decentralized AI (CryptoTimes, March 2026)

- Bittensor Subnets Hit $550M Valuation (Blockonomi, March 2026)

- 0G Storage vs Filecoin and Arweave (0G Blog, March 2026)

- Geth to Reth Validator Migration (0G Blog, March 2026)